Why AI will kill some occupations and leave others strangely intact — and why the difference has nothing to do with what a machine can do

In November 2016, computer scientist Geoffrey Hinton told a Toronto audience that medical schools should stop training radiologists. AI, he declared, would soon outperform them at reading scans. Ten years later, machines do indeed read more and more X-rays and MRI scans. Yet in the US both the number of radiologists and their salaries have increased substantially over the last decade.

AI has taken over some tasks (reading images), but that has not destroyed the job (radiologist). Economist Luis Garicano (LSE) and coauthors make sense of this phenomenon in a recent research paper. Its main message fits in a slogan: the labor market prices jobs, not tasks. The structure of a job, meaning how tightly its component tasks are bundled together, turns out to matter more than the raw amount of AI exposure. The paper’s title captures the point: Weak Bundle, Strong Bundle.

The Exposure Fallacy

The dominant framework for thinking about AI and employment counts the fraction of tasks within an occupation that AI can perform and reads that fraction as a “displacement risk.” On this view, more exposure means a greater danger of being replaced by AI.

This reasoning misses a major point: a radiologist does not just sell image classification. She triages cases, communicates with referring physicians, trains residents, makes difficult decisions, and signs (i.e. takes personal responsibility for) diagnoses that others will then act on. The market buys a bundled service. If AI can now classify the image, nothing necessarily changes, unless the classification task can actually be peeled off and handed to a machine while the rest of the job continues without it.

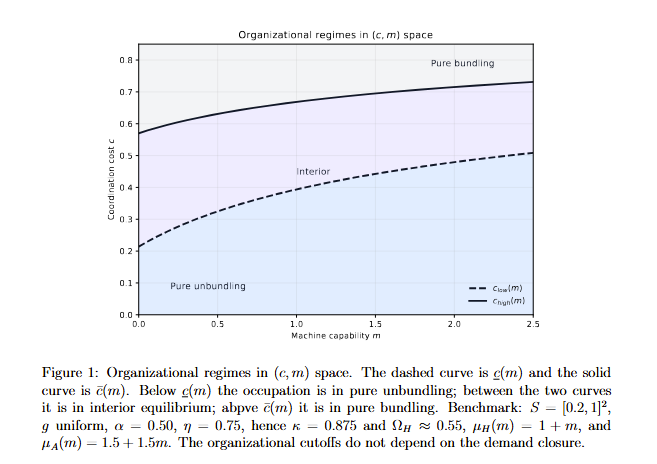

Garicano, Li and Wu call the cost of peeling tasks apart the coordination cost, denoted c. It is the fraction of output value destroyed when two tasks that used to live inside one job are instead performed by separate agents. When c is low, you have a weak bundle. When c is high, you have a strong bundle. Hold the machine’s capability fixed and the job outcome still changes with c. As shown in Figure 1, when coordination costs are high or machine capability is low, tasks tend to stay bundled in a single job; when machine capability is high or the coordination cost of splitting is small, the tasks composing a job are “unpacked” and split.

Figure 1. Organizational regimes as AI capability rises. Below the dashed curve every worker prefers AI-assisted unbundling; above the solid curve every worker stays bundled. Better AI shifts both curves upward — expanding the pure unbundling zone. Source: Garicano, Li & Wu (2026).
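To make the role of c concrete, here is a deliberately simple sketch. It is not the paper's model. It assumes the bundled job is worth 1, and that handing task 1 to the machine scales output by a hypothetical factor (1 + ai_gain) while destroying a fraction c of the resulting value. The names `split_value`, `unbundling_threshold`, `prefers_unbundling` and `ai_gain` are illustrative assumptions.

```python
def split_value(c: float, ai_gain: float) -> float:
    """Value of the service when task 1 is handed to a machine.

    Assumption (not from the paper): splitting multiplies output by
    (1 + ai_gain) but destroys a fraction c of the resulting value.
    """
    return (1.0 - c) * (1.0 + ai_gain)


def unbundling_threshold(ai_gain: float) -> float:
    """Largest c at which splitting still matches the bundled value of 1.

    Solves (1 - c) * (1 + ai_gain) = 1 for c.
    """
    return ai_gain / (1.0 + ai_gain)


def prefers_unbundling(c: float, ai_gain: float) -> bool:
    """True when the split service is worth more than the bundled one."""
    return split_value(c, ai_gain) > 1.0


# Same machine capability, two different coordination costs.
for c in (0.2, 0.6):
    regime = "unbundle" if prefers_unbundling(c, ai_gain=1.0) else "stay bundled"
    print(f"c={c}: {regime}")
```

With the same `ai_gain`, the low-c job splits and the high-c job stays whole. Raising `ai_gain` raises the threshold, in the spirit of the upward-shifting curves in Figure 1, although this toy has a single threshold rather than the figure's two curves.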

Three Steps and One Mechanism

This equilibrium can be understood in three steps.

First: bundled workers keep a bigger slice of the pie. In competitive equilibrium, a worker who supplies both tasks in a bundled job earns revenue from both tasks. Workers whose job is stripped down to only one of the original tasks see their revenue (i.e. their salary) fall. The labor share narrows not because wages were cut per se, but because the job boundary moved, with a larger share of tasks being performed by “machines” (capital) and a smaller share being performed by workers (labor).

Second: unbundling triggers a capacity shock. In a bundled job, the worker splits her time between task 1 (the codifiable bit: drafting, classifying, calculating) and task 2 (the relational, contextual, or physical-presence bit). That time split is a structural bottleneck. The moment AI takes over task 1 autonomously, the worker reclaims every minute she used to spend on it and pours it into task 2. Each surviving worker can now produce more task-2 output than she did before, not because her skill improved, but because she stopped doing something else.

Third: inelastic demand turns the capacity shock into displacement. If every surviving worker suddenly produces more, the increased supply floods the market. If demand for the service is sufficiently inelastic (think legal filings, medical procedures, accounting engagements), the price falls by more than output rises, so revenue per worker drops. It drops enough that the least-productive workers can no longer cover their outside option and leave the market (the profession). This is the task-substitution mechanism already developed by Nobel laureate Daron Acemoglu in his work with Pascual Restrepo (2018, 2019), but with a crucial condition attached: the mechanism is triggered only in “weak-bundle” occupations. In strong bundles, the job absorbs AI as an internal assistant, the capacity shock never arrives, and employment barely moves.
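The three steps can be traced in a toy market. This is a sketch under stated assumptions, not the paper's model. The function `equilibrium`, the constant-elasticity demand curve, the productivity grid and every parameter value below are hypothetical choices made only for illustration.

```python
def equilibrium(productivities, capacity, demand_scale, elasticity, outside_option):
    """Return (employed, price) for a toy competitive market.

    Assumptions (illustrative, not from the paper):
    - worker i supplies productivity_i * capacity units of the service;
    - demand is Q = demand_scale * price ** (-elasticity);
    - a worker stays only if price * own output >= outside_option.
    """
    total_output = 0.0
    employed = 0
    price = None
    # Add workers from most to least productive, stopping at the first
    # one whose revenue would not cover her outside option.
    for q in sorted(productivities, reverse=True):
        output = q * capacity
        candidate_total = total_output + output
        candidate_price = (demand_scale / candidate_total) ** (1.0 / elasticity)
        if candidate_price * output < outside_option:
            break
        total_output = candidate_total
        price = candidate_price
        employed += 1
    return employed, price


workers = [1.0 + 0.01 * i for i in range(100)]  # hypothetical productivities
params = dict(demand_scale=100.0, elasticity=0.5, outside_option=0.8)

# Bundled: half of each worker's time goes to task 1, so capacity is 0.5.
before = equilibrium(workers, capacity=0.5, **params)
# Unbundled: AI does task 1, so all time goes to task 2 and capacity is 1.0.
after = equilibrium(workers, capacity=1.0, **params)

print("bundled:   employed, price =", before)
print("unbundled: employed, price =", after)
```

In this setup the unbundled market ends with a lower price and fewer workers than the bundled one. The inelasticity condition is doing the work: at a fixed headcount, doubling capacity scales revenue per worker by 2 ** (1 - 1 / elasticity), which is below 1 only when `elasticity` is below 1.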

What Makes a Bundle Strong?

The key parameter is the coordination cost c. The paper identifies three empirically legible determinants of this cost: shared costs, liability and cross-task learning. First, shared costs: when the same professional who reads the scan is also the one who knows the patient’s history, spoke to the family, and will explain the findings to a junior doctor, splitting those roles destroys information that neither party separately possesses. Each separate agent would have to acquire that context again, so splitting the tasks duplicates shared costs that the single job incurs only once.

Second, in many professions it is the professional signature that makes the service valid and worth its price: a diagnosis, a legal opinion, a fiduciary recommendation. Autonomous AI cannot simply absorb the task without the service losing its contractual value. The liability link keeps the bundle intact. Lawyers, accountants and doctors are not protected by mystical guild-like barriers to entry; they are protected by the legal architecture that requires a named human to take liability for the decision.

Finally, what a doctor learns examining a patient improves how she reads the scan. What a lawyer learns researching the law improves how she advises the client. When doing task 1 makes you better at task 2, and vice versa, separating them destroys a productive feedback loop.

Market Entry

There is a fourth implication that the paper flags. Unbundling does not just change the revenue share — it lowers entry barriers into the residual human role. In a bundled occupation, you need to be competent at both tasks to enter. Once task 1 is handled by AI, the market no longer asks whether you can supply the full bundle. It asks only whether you can supply what remains.

This is good news and bad news simultaneously. Workers who previously lacked the task-1 credentials can now enter the occupation, but the door that lets them in also lets in more competition, which depresses earnings for incumbents. Not only has the labor share shrunk (the bundle is smaller); it must now be divided among more claimants.

The early empirical evidence fits. Humlum and Vestergaard (2025) link chatbot adoption to Danish administrative records and find task restructuring but null effects on earnings and hours — consistent with a world where most jobs are at least moderately bundled. Gathmann, Grimm and Winkler (2024) find AI shifts the task content of German jobs with small displacement effects. Bundling tasks into a job works as a protection for the job itself.

What This Means for the AI Jobs Debate

The standard “AI exposure” accounts are not wrong, but they miss the difference between tasks and jobs: they ignore whether the tasks AI can perform are independently extractable from the jobs that contain them. Two occupations can have identical exposure to AI and face opposite employment trajectories depending on how tightly their tasks are bundled.

This has profound implications for employers and policy makers. The question to ask is not what AI can do, but what is lost when the automatable task is separated from the rest of the job. If the answer is “nothing much,” the model predicts that you will soon face a capacity shock, and the least-productive workers will bear its cost. If the answer is “quite a lot,” AI is more likely to function as an internal assistant, improving performance without redrawing the job boundary.

For workers, the diagnostic is equally concrete. The question is not “can AI do what I do?” but “can AI do part of what I do without the rest becoming worthless?” Radiologists, it turns out, are not safe because image classification is hard for machines. They are safe because image classification without the clinical embedding, the institutional accountability, and the diagnostic conversation is a different and lesser product.

Conclusions

The model offers a sobering final note. Strong bundles are not permanent bundles. As Figure 1 shows, rising AI capability pushes both regime boundaries upward, expanding the unbundling zone. For any given coordination cost c, there is a level of machine capability at which even a previously strong bundle tips into the interior regime between the two curves and eventually into full unbundling.

The interesting empirical question then is not just “which occupations are exposed?” but “which occupations have enough coordination cost to delay the shock — and for how long?”

References

- Acemoglu, Daron, and Pascual Restrepo. “Low-skill and high-skill automation.” Journal of Human Capital 12.2 (2018): 204-232.

- Acemoglu, Daron, and Pascual Restrepo. “Automation and new tasks: How technology displaces and reinstates labor.” Journal of Economic Perspectives 33.2 (2019): 3-30.

- Garicano, L., J. Li, and Y. Wu. “Weak Bundle, Strong Bundle: How AI Redraws Job Boundaries.” Working paper, London School of Economics / University of Hong Kong (2026).

- Gathmann, Christina, Felix Grimm, and Erwin Winkler. “AI, task changes in jobs, and worker reallocation.” CESifo Working Paper No. 11585 (2024).

- Humlum, Anders, and Emilie Vestergaard. “The unequal adoption of ChatGPT exacerbates existing inequalities among workers.” Proceedings of the National Academy of Sciences 122.1 (2025): e2414972121.

Emanuele Bracco